First, a quick recap of SmartTests:

SmartTests are plain Playwright scripts with intent comments before steps. The comments enable hybrid execution: the script runs as written and falls back to agent-mode execution when needed.

ExploreChimp uses SmartTests to guide its explorations along predefined pathways. Along those pathways it identifies UX issues in the web app, such as performance problems, visual glitches, usability and content issues, and more.

The Challenge: Context for Bugs

When ExploreChimp finds bugs, it tags them with the “Screen” and “State” where they were captured. This context helps with troubleshooting and understanding when issues occur.

- A Screen is a conceptual view of your application: Dashboard, Homepage, Shopping Cart, etc.

- A State represents a specific situation within that screen: Empty Cart vs Cart with Items, Logged In vs Logged Out, etc.

ExploreChimp autonomously determines the current screen and state based on the steps taken and the current screenshot. While this makes getting started easier, the result may not always align with your mental model or with the granularity at which you want issues tracked.

The Solution: Screen-State Annotations

Now you can add explicit screen-state markers directly in your SmartTest scripts. These annotations tell ExploreChimp exactly which screen and state the app is in at a given point in the test, ensuring bugs are tagged with the context you care about.

How It Works

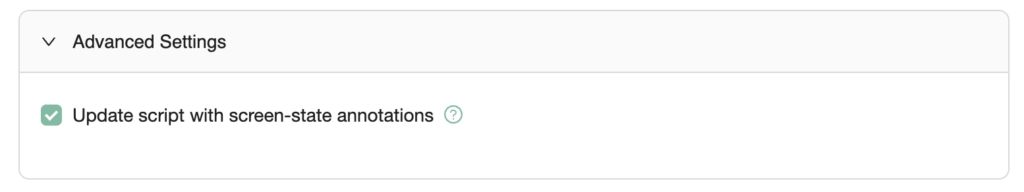

After ExploreChimp runs, if the script didn’t contain screen-state markers, it updates the script with the screen-state annotations it determined during the walk. If you don’t want the agent to update the script, you can turn this off by unchecking “Update script with screen-state annotations” under Advanced Settings (in the Exploration config wizard).

You can edit these annotations to match your conceptual model. For example, you may want to track UX bugs for “Cart with out-of-stock items” vs “Cart with in-stock items” instead of the agent-suggested states.

On the next run, ExploreChimp uses your annotations instead of guessing, so bugs are tagged consistently with your terminology.
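The precedence described above can be pictured as a simple fallback. The sketch below is a hypothetical illustration in TypeScript, not ExploreChimp's actual implementation: the `ScreenState` type and the `resolveScreenState` function are assumed names, and the code only shows that an explicit annotation, when present, wins over the label the agent inferred.

```typescript
// Hypothetical shape for a screen-state label (assumed, not ExploreChimp's schema).
interface ScreenState {
  screen: string;
  state: string;
}

interface ResolvedContext {
  context: ScreenState;
  source: 'annotation' | 'inferred';
}

// Prefer the annotation from the script; fall back to the inferred label.
function resolveScreenState(
  annotated: ScreenState | undefined,
  inferred: ScreenState,
): ResolvedContext {
  if (annotated !== undefined) {
    return { context: annotated, source: 'annotation' };
  }
  return { context: inferred, source: 'inferred' };
}
```

Under this assumption, a step annotated as “Cart with out-of-stock items” keeps that label on every run, regardless of what the agent would have inferred from the screenshot.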

Here is an example of a SmartTest with screen-state annotations:

```typescript
import { test } from '@playwright/test';

test('Shopping Cart Flow', async ({ page }) => {
  // Navigate to homepage
  await page.goto('https://example.com');
  // @Screen: Homepage @State: Default

  // Search for a product
  await page.getByPlaceholder('Search products').fill('laptop');
  await page.getByRole('button', { name: 'Search' }).click();
  // @Screen: Search Results @State: With Results

  // Add item to cart
  await page.getByRole('link', { name: /laptop/i }).first().click();
  await page.getByRole('button', { name: 'Add to Cart' }).click();
  // @Screen: Shopping Cart @State: Cart with Items

  // Proceed to checkout
  await page.getByRole('button', { name: 'Proceed to Checkout' }).click();
  // @Screen: Checkout @State: Payment Step
});
```

Benefits

- Consistent bug tagging: Bugs are tagged consistently using your terminology, not AI-generated labels.

- Better organization: View bugs by screen-state in Atlas → SiteMap with your own categories.

- Easy refinement: Edit annotations to match your mental model, with no need to retrain or reconfigure.
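To see why consistent labels help with organization, consider how bugs might be bucketed by screen and state. The following is a minimal sketch under stated assumptions: the `TaggedBug` shape and the `groupByScreenState` helper are hypothetical and do not reflect how Atlas stores or groups bugs internally.

```typescript
// Hypothetical bug record; field names are illustrative only.
interface TaggedBug {
  title: string;
  screen: string;
  state: string;
}

// Bucket bugs under a "Screen / State" key, preserving insertion order.
function groupByScreenState(bugs: TaggedBug[]): Map<string, TaggedBug[]> {
  const groups = new Map<string, TaggedBug[]>();
  for (const bug of bugs) {
    const key = `${bug.screen} / ${bug.state}`;
    const bucket = groups.get(key) ?? [];
    bucket.push(bug);
    groups.set(key, bucket);
  }
  return groups;
}
```

With exact-match keys like these, two runs that label the same view “Cart with Items” and “Non-empty Cart” would land in separate buckets, which is the kind of drift that fixed annotations avoid.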

Getting Started

- Run ExploreChimp on your SmartTest (annotations are added automatically).

- Review and edit the annotations in your script to match your terminology.

- The next time ExploreChimp is run on that test, it will use your annotations for consistent bug tagging.

The annotations are simple comments, so they don’t affect test execution – they’re purely for ExploreChimp’s context understanding.
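Because the annotations are ordinary comments, any tool can read them with plain text matching. The sketch below shows one way to extract them from a script, assuming each annotation sits on its own line in the `// @Screen: ... @State: ...` form used in the example above. The `parseAnnotations` function and `ScreenStateAnnotation` type are hypothetical helpers for illustration, not part of ExploreChimp or Playwright, and the real parser may accept other forms.

```typescript
// Hypothetical helper types and functions; not an ExploreChimp API.
interface ScreenStateAnnotation {
  line: number; // 1-based line number of the comment in the script
  screen: string;
  state: string;
}

// Matches a whole-line comment of the form: // @Screen: <name> @State: <name>
const ANNOTATION_PATTERN = /^\s*\/\/\s*@Screen:\s*(.+?)\s+@State:\s*(.+?)\s*$/;

function parseAnnotations(script: string): ScreenStateAnnotation[] {
  const annotations: ScreenStateAnnotation[] = [];
  script.split(/\r?\n/).forEach((text, index) => {
    const match = ANNOTATION_PATTERN.exec(text);
    if (match) {
      annotations.push({ line: index + 1, screen: match[1], state: match[2] });
    }
  });
  return annotations;
}
```

Run against the shopping cart example, a helper like this would return one entry per annotated step, which can be handy for checking that the labels in a script use the terminology your team agreed on.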